Understanding AI Compliance: What It Means and How to Get Started

The last two years changed everything about how companies need to think about AI compliance. What used to be mostly voluntary guidelines and best practices turned into actual law, and it happened fast. Between 2024 and 2025 alone, over 1,080 AI-related bills were introduced across US states, and 186 of them passed. That’s before counting binding international regulation like the EU AI Act and widely adopted frameworks like the NIST AI Risk Management Framework (NIST AI RMF) and ISO 42001 that organizations use to structure their compliance programs.

You can see the impact in how IT leaders are responding. Gartner’s 2025 survey found that over 70% of them say compliance is one of their biggest challenges when deploying AI tools. The harder part is that only 23% feel they actually have a handle on governance. So most companies are in a tough spot: they know they need to comply with something, but they’re not sure they’re doing it right.

If you’re trying to figure out where to start with AI compliance, this guide covers everything from what the regulations require to a step-by-step action plan you can follow. But first, let’s define what AI compliance actually means.

What is AI compliance?

AI compliance is the operational discipline of ensuring your AI systems meet legal and ethical requirements throughout their lifecycle. What we’re really talking about here is risk management at scale. When you deploy AI, you’re putting systems in place that make important decisions, often with little human review. Compliance means you’ve built the right safeguards around that.

What makes AI compliance different from traditional compliance is that AI systems learn and evolve. A compliant system today can drift into non-compliance tomorrow as it processes new data or as its predictions change. Traditional compliance is mostly about fixed processes and controls, but AI compliance requires continuous monitoring because the technology itself is dynamic.

Because AI is dynamic, compliance has to be part of your ongoing AI operations. Otherwise, you’re exposed to legal and reputational risks you won’t see coming.

Key aspects of AI compliance

AI compliance has four core dimensions that organizations must address:

1. Regulatory and legal requirements

Legal compliance is where everything starts. Existing data protection laws like GDPR and CCPA apply to AI systems that process personal information, even though they predate modern AI. AI-specific regulations layer on additional requirements based on a system’s risk level (more on this in a minute).

2. Technical safeguards

Technical safeguards are the protective features you build directly into your AI systems to meet compliance requirements and prevent harm. The key technical safeguards include:

Explainability tools that let you understand and communicate how your AI reached specific decisions or predictions

Bias testing that checks whether your model treats different demographic groups fairly before and after deployment

Access controls and security that protect your models and data from unauthorized use, tampering, or breaches

Performance tracking that measures whether your AI maintains acceptable accuracy and reliability over time

Input validation that checks incoming data for quality issues, outliers, or attempts to manipulate the system

Many regulations explicitly require these capabilities. If you can’t explain why your AI denied someone a loan or demonstrate fair treatment across protected groups, you have a compliance gap.
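To make “demonstrate fair treatment” more concrete, here’s a minimal sketch of one common bias test: compare favorable-outcome rates across groups and flag any group whose rate falls below 80% of the best-treated group’s rate. The 80% threshold comes from the US “four-fifths rule” used in employment selection. It’s a screening heuristic, not a legal safe harbor under the EU AI Act or any other framework, and the function names and sample data here are hypothetical.

```python
from collections import Counter


def selection_rates(outcomes, groups):
    """Share of favorable outcomes (1 = approved) for each group."""
    totals = Counter(groups)
    favorable = Counter(g for g, y in zip(groups, outcomes) if y == 1)
    return {g: favorable[g] / totals[g] for g in totals}


def adverse_impact_ratios(outcomes, groups):
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(outcomes, groups)
    best = max(rates.values())
    if best == 0:
        raise ValueError("no favorable outcomes in any group; ratio is undefined")
    return {g: rate / best for g, rate in rates.items()}


def flag_groups(outcomes, groups, threshold=0.8):
    """Groups whose ratio falls below the threshold (0.8 = four-fifths rule)."""
    ratios = adverse_impact_ratios(outcomes, groups)
    return sorted(g for g, ratio in ratios.items() if ratio < threshold)


# Hypothetical loan decisions for two applicant groups.
approved = [1, 1, 1, 0, 1, 0, 0, 0]
group = ["A", "A", "A", "A", "B", "B", "B", "B"]
print(adverse_impact_ratios(approved, group))  # A: 1.0, B: about 0.33
print(flag_groups(approved, group))            # ['B']
```

Running the same check before launch and on a schedule in production covers both halves of “before and after deployment.”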

3. Governance structures

Governance creates clear accountability and decision-making processes for AI compliance across your organization. It establishes who’s responsible when things go wrong, how decisions about AI deployment get made, and what your organization’s risk tolerance is. This means creating review boards, defining approval processes for high-risk applications, and building escalation paths when issues surface.

Without formal governance, teams may deploy AI without proper oversight because no one has clear authority or responsibility for compliance decisions.

4. Documentation standards

Comprehensive records are your evidence that compliance requirements have been met throughout the AI lifecycle. You need detailed documentation of what data you trained on, how you tested for bias, what decisions your model made and why, who approved deployment, and how you’ve monitored performance.

When regulators ask questions, your documentation is what stands between a routine inquiry and a serious problem.
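One way to keep that evidence ready is to maintain a structured record per model version instead of scattered documents. The sketch below is a minimal example using a Python dataclass. The field names are hypothetical and mirror the items listed above, and a real program would also keep per-decision logs, which this system-level record doesn’t cover.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import date


@dataclass
class ModelRecord:
    """Hypothetical per-version compliance record; field names are illustrative."""
    system_name: str
    version: str
    training_data: list[str]   # datasets and snapshots used for training
    bias_tests: list[str]      # tests run and where their results live
    approved_by: str           # person or board that approved deployment
    approved_on: date
    monitoring: list[str] = field(default_factory=list)  # active production checks

    def missing_fields(self) -> list[str]:
        """Fields still empty, i.e. evidence you couldn't hand a regulator today."""
        return [name for name, value in asdict(self).items() if value in ("", [], None)]

    def to_json(self) -> str:
        return json.dumps(asdict(self), default=str, indent=2)


record = ModelRecord(
    system_name="credit-scoring",
    version="2.3.0",
    training_data=["loan_applications_2019_2024 snapshot"],
    bias_tests=["adverse impact ratio by age band, run before release"],
    approved_by="Model Risk Committee",
    approved_on=date(2025, 3, 1),
)
print(record.missing_fields())  # ['monitoring']
```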

Why should your organization care about AI compliance?

AI compliance matters because the financial and operational risks of getting it wrong have become too significant to treat as secondary concerns. Major jurisdictions have introduced AI regulations backed by enforcement mechanisms, creating real consequences for organizations that deploy AI without proper safeguards.

For example, the EU AI Act, whose prohibitions on certain AI practices took effect in February 2025, carries penalties of up to €35 million or 7% of global annual revenue, whichever is higher, for violating those prohibitions. Beyond the fines themselves, a single compliance issue can trigger scrutiny under data protection laws, sector-specific regulations, and AI-specific frameworks all at once, compounding both legal exposure and operational disruption.
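For prohibited practices, Article 99 of the Act caps fines at €35 million or 7% of total worldwide annual turnover for the preceding financial year, whichever is higher (for SMEs and start-ups, whichever is lower). The arithmetic below shows why the percentage dominates for large companies. It computes only the maximum possible fine, not what a regulator would actually impose, and the function name is our own.

```python
def max_fine_prohibited_practice(worldwide_turnover_eur: int, is_sme: bool = False) -> int:
    """Upper bound on an EU AI Act fine for a prohibited-practice violation, in euros."""
    fixed_cap = 35_000_000
    turnover_cap = worldwide_turnover_eur * 7 // 100  # 7% of prior-year turnover
    # Large undertakings face the higher of the two caps; SMEs face the lower.
    return min(fixed_cap, turnover_cap) if is_sme else max(fixed_cap, turnover_cap)


print(max_fine_prohibited_practice(1_000_000_000))             # 70000000
print(max_fine_prohibited_practice(200_000_000))               # 35000000
print(max_fine_prohibited_practice(200_000_000, is_sme=True))  # 14000000
```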

The cost of fixing compliance problems after deployment dwarfs the investment required to build compliance in from the beginning. Add in reputational damage, restricted market access, and the opportunity cost of delayed AI initiatives, and the business case for proactive compliance becomes straightforward.

Which compliance frameworks should teams be monitoring?

The last two years have seen a wave of AI compliance regulations emerge across major economies. Organizations deploying AI now face multiple overlapping compliance obligations depending on where they operate and what their systems do.

Here are the frameworks that matter most:

The EU AI Act

The EU AI Act is the world’s first comprehensive, legally binding AI regulation. The Act has extraterritorial reach, meaning any organization whose AI impacts EU users must comply regardless of where they’re based. Penalties reach up to €35 million or 7% of global annual revenue.

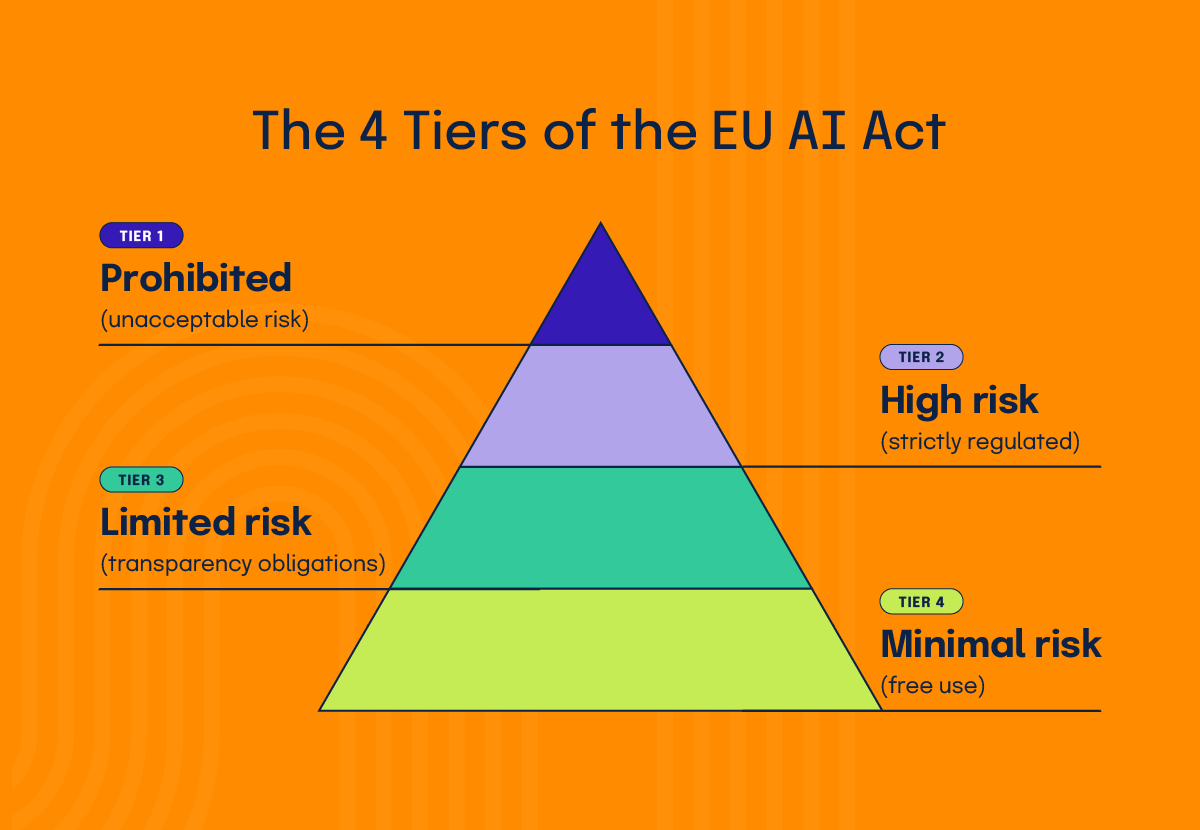

The Act entered into force on August 1, 2024, and most of its high-risk system obligations are set to become enforceable in August 2026. It takes a risk-based approach that sorts AI systems into four tiers:

Tier 1: Prohibited (unacceptable risk)

AI systems that manipulate behavior, exploit vulnerabilities, enable social scoring, or use real-time remote biometric identification in publicly accessible spaces for law enforcement (with narrow exceptions)

Tier 2: High risk (strictly regulated)

AI used in critical areas like hiring, credit decisions, law enforcement, or essential services requires conformity assessments and continuous monitoring

Tier 3: Limited risk (transparency obligations)

AI that interacts with humans or generates content (like chatbots and deepfakes) and must be transparent about AI use

Tier 4: Minimal risk

Low-impact AI systems like spam filters or AI-enabled video games with no specific regulatory requirements
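A first-pass triage against these tiers can be as simple as checking each system’s use cases from the most severe tier down. The sketch below uses hypothetical use-case labels that paraphrase the examples above. It’s a screening aid for routing systems to legal review, not a substitute for the Act’s actual definitions and annexes. Because it checks the strictest tier first, a chatbot used in hiring lands in high risk, although in practice it would still owe the transparency obligations too.

```python
from enum import Enum


class RiskTier(Enum):
    PROHIBITED = 1
    HIGH = 2
    LIMITED = 3
    MINIMAL = 4


# Hypothetical use-case labels paraphrasing the examples above, not legal definitions.
PROHIBITED_USES = {"social_scoring", "behavioral_manipulation", "exploiting_vulnerabilities"}
HIGH_RISK_USES = {"hiring", "credit_decisions", "law_enforcement", "essential_services"}
TRANSPARENCY_USES = {"chatbot", "content_generation", "deepfake"}


def triage(use_cases: set[str]) -> RiskTier:
    """Return the most severe tier any of the system's use cases falls into."""
    if use_cases & PROHIBITED_USES:
        return RiskTier.PROHIBITED
    if use_cases & HIGH_RISK_USES:
        return RiskTier.HIGH
    if use_cases & TRANSPARENCY_USES:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


print(triage({"chatbot", "hiring"}))  # RiskTier.HIGH
print(triage({"spam_filtering"}))     # RiskTier.MINIMAL
```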

NIST AI Risk Management Framework (NIST AI RMF)

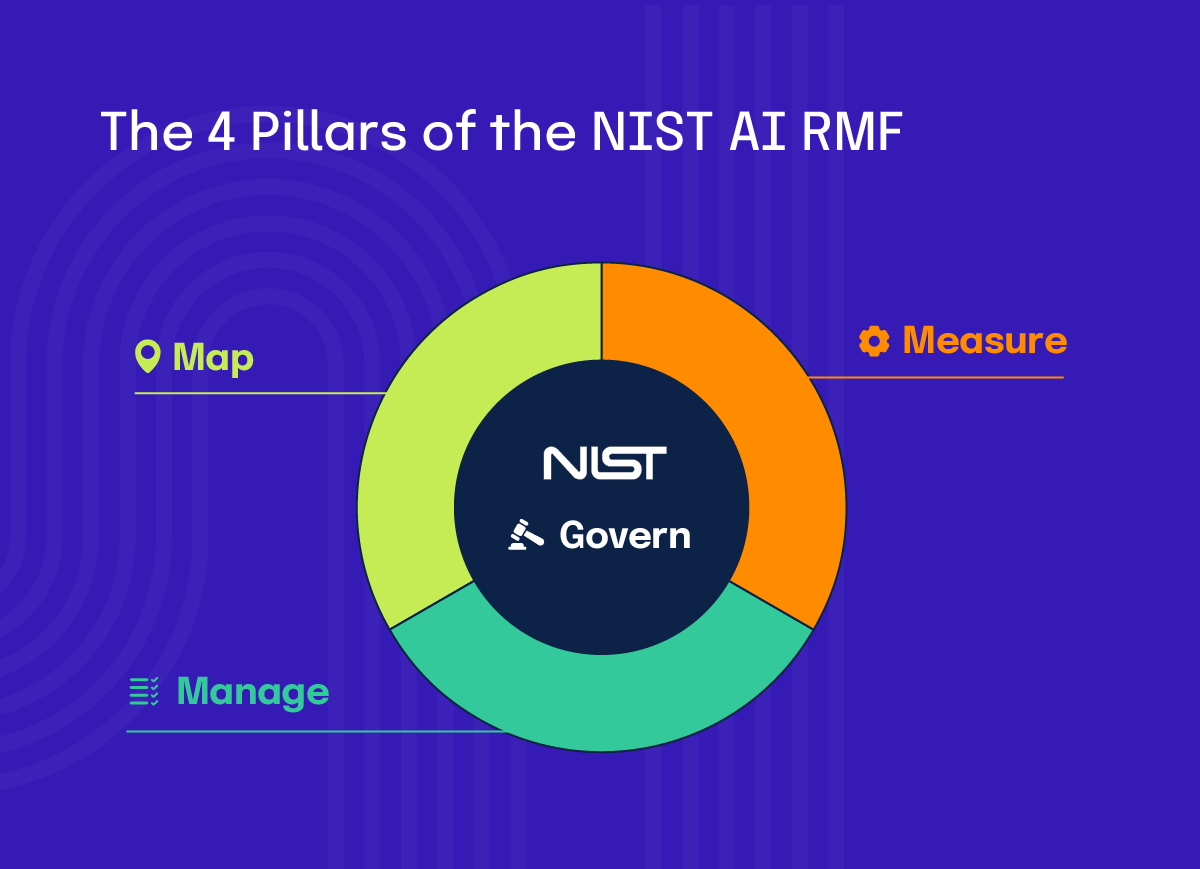

The NIST AI RMF is the United States’ voluntary framework for AI risk management. Although it isn’t legally binding for private organizations, it has become the de facto standard for demonstrating AI risk management capability in the US market, and it is increasingly expected in federal procurement and adopted by regulated industries. Unlike the EU AI Act, NIST doesn’t impose penalties, but failing to align with it can affect your ability to win government contracts or operate in regulated sectors. Published on January 26, 2023, with a Generative AI Profile added on July 26, 2024, the framework is organized around four core functions:

Govern

Establish leadership accountability, policies, and organizational structures for AI risk management

Map

Identify the context, categorize risks, and understand the AI system’s purpose and potential impacts

Measure

Assess and analyze identified risks using metrics, testing, and evaluation methods

Manage

Implement controls to mitigate risks and monitor AI systems throughout their lifecycle
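The RMF doesn’t prescribe a scoring method, so teams choose their own. As one illustration, assuming a 1 to 5 likelihood scale, a 1 to 5 impact scale, and an action threshold of 12 (all our choices, not NIST’s), Map produces the list of risks, Measure scores them, and Manage picks out the high-scoring risks that still lack a mitigation:

```python
from dataclasses import dataclass


@dataclass
class Risk:
    description: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe)
    mitigation: str = ""  # empty until Manage assigns a control

    @property
    def score(self) -> int:
        # Measure: a simple likelihood x impact score (our choice, not NIST's).
        return self.likelihood * self.impact


def needs_action(register, threshold=12):
    """Manage: risks at or above the threshold with no mitigation yet, worst first."""
    ranked = sorted(register, key=lambda r: r.score, reverse=True)
    return [r.description for r in ranked if r.score >= threshold and not r.mitigation]


# Map: risks identified for a hypothetical credit-scoring model.
register = [
    Risk("Training data under-represents applicants over 60", likelihood=4, impact=4),
    Risk("Accuracy drifts after interest-rate changes", likelihood=3, impact=4,
         mitigation="Monthly drift check with alerting"),
    Risk("Explanations too technical for applicants", likelihood=2, impact=3),
]
print(needs_action(register))  # ['Training data under-represents applicants over 60']
```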

ISO 42001

ISO 42001 is the first international standard for AI Management Systems, published in December 2023. It provides a certifiable framework for establishing, implementing, and maintaining responsible AI governance across an organization.

The standard is voluntary but offers third-party certification that validates your AI management practices. ISO 42001 covers governance structures, risk management, transparency, ethical use, and continuous improvement throughout the AI lifecycle. Organizations can pursue full certification through accredited bodies, which involves comprehensive audits similar to ISO 27001 for information security.

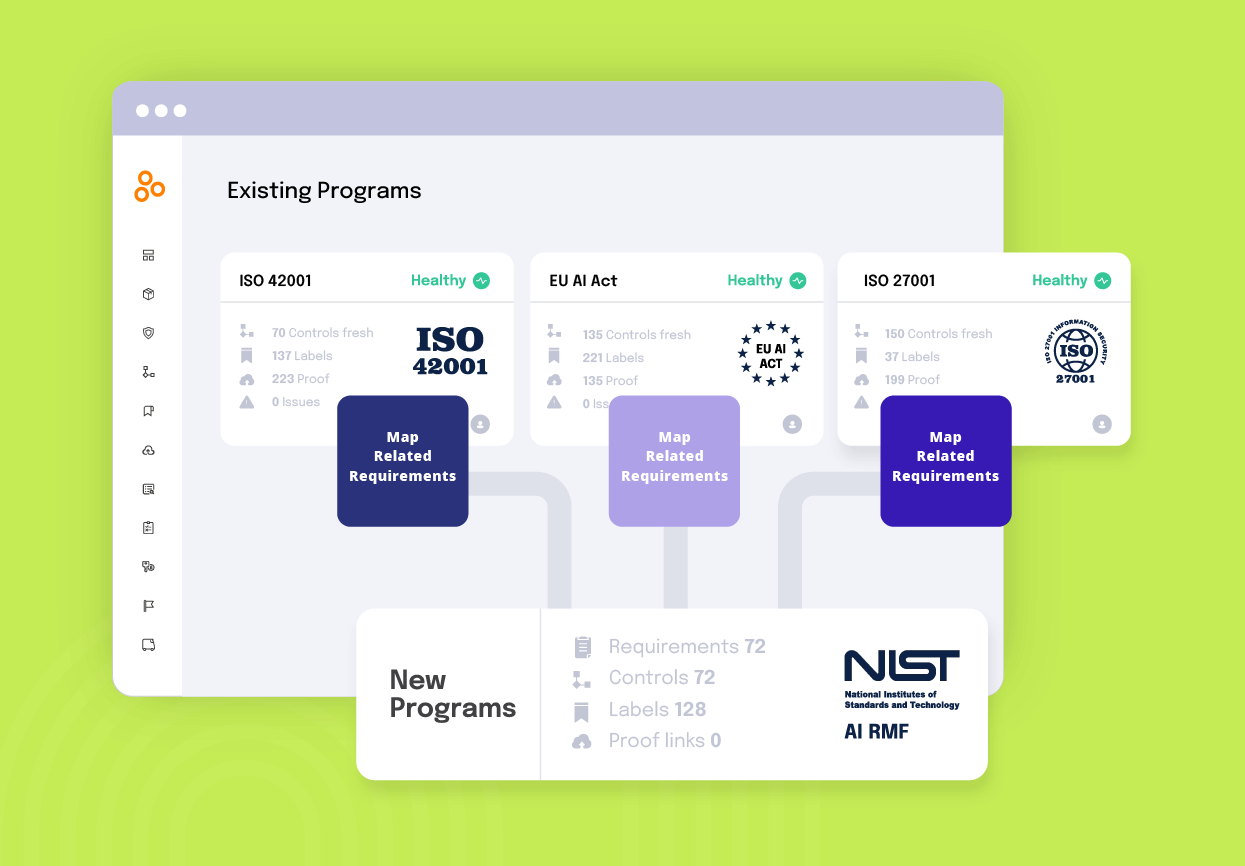

Tools like Hyperproof help organizations implement ISO 42001 without starting from zero. The platform provides templates built around the standard, eliminating much of the setup work. You can reuse controls across multiple compliance frameworks, assign responsibilities, and track completion.

Singapore’s Model AI Governance Framework

Singapore’s Model AI Governance Framework represents one of the most innovative approaches to AI regulation globally. It is complemented by AI Verify, a testing framework and software toolkit that lets organizations demonstrate their AI governance practices through standardized, objective tests. AI Verify is gaining traction as a trust signal in the ASEAN region, with organizations using it to prove responsible AI practices to enterprise buyers and government clients.

OECD AI Principles

The OECD AI Principles represent a global consensus framework developed by the Organisation for Economic Co-operation and Development, adopted by member countries to guide responsible AI development.

Established in 2019 and endorsed by more than 40 countries across six continents, these principles provide a foundation for international AI governance without imposing legally binding requirements. The framework has become influential in shaping national AI strategies worldwide, with many countries referencing these principles when developing their own regulations.

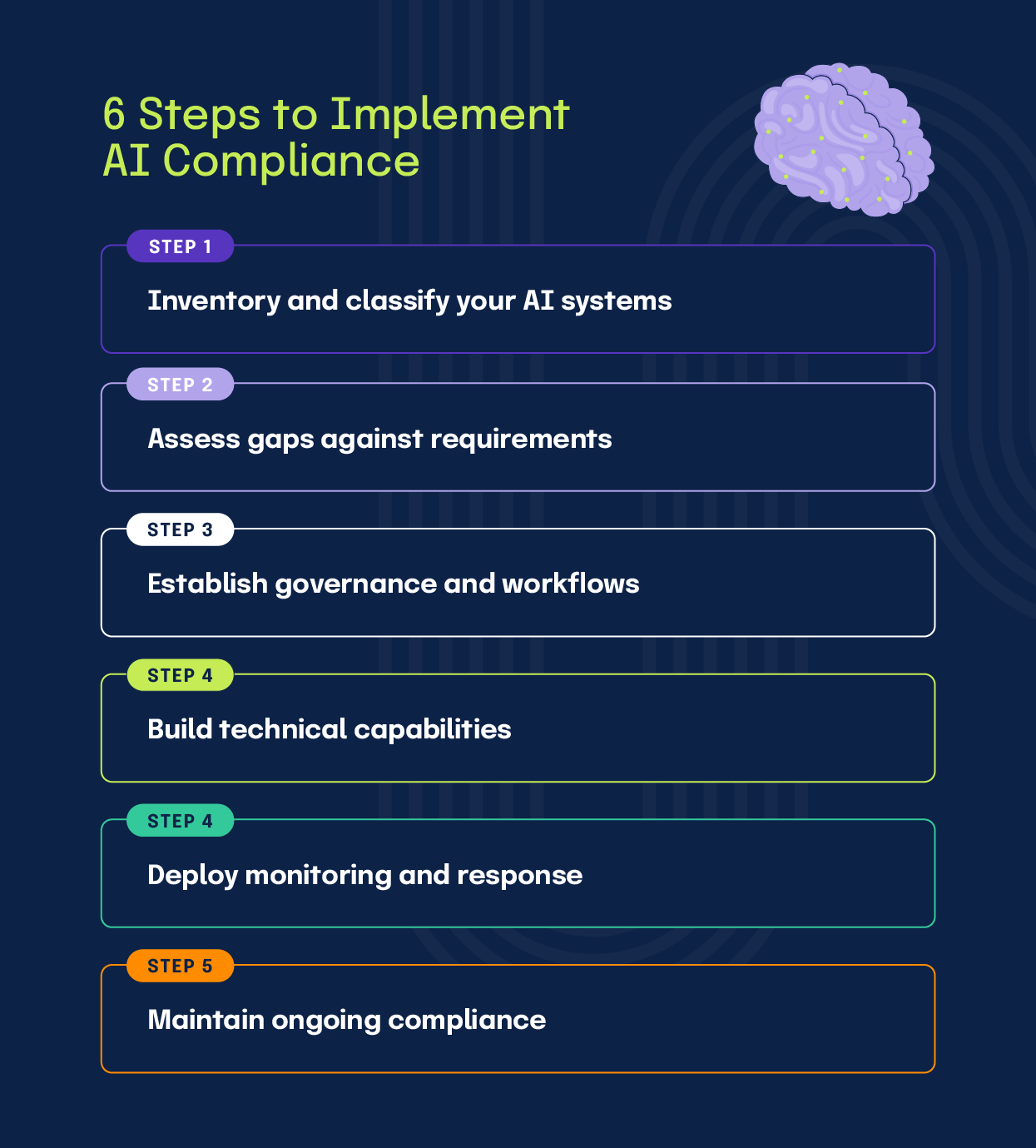

How to implement AI compliance: A 6-step approach to operationalization

The following approach provides a structured path from assessment to continuous operations:

Step 1: Inventory and classify your AI systems

Start by cataloging every AI system your organization develops, deploys, or uses. Document the technical architecture, data flows, geographic deployment, and business purpose for each system. Then classify each system by the regulatory frameworks that apply based on jurisdiction, industry, and use case.

This inventory defines your compliance scope and often reveals exposure you didn’t know existed.
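An inventory is easiest to keep current as structured data rather than a slide deck. Here’s a minimal sketch of an inventory entry plus a rule of thumb for which frameworks apply, assuming hypothetical field names and jurisdiction codes. The rules are deliberately simplified (CCPA, for instance, also depends on business-size thresholds this ignores), so treat the output as a starting list for legal review.

```python
from dataclasses import dataclass, field


@dataclass
class AISystem:
    name: str
    purpose: str
    jurisdictions: set[str]  # where it's deployed or its output is used, e.g. "EU", "US-CA", "SG"
    processes_personal_data: bool
    data_sources: list[str] = field(default_factory=list)


def applicable_frameworks(system: AISystem) -> set[str]:
    """Simplified, hypothetical applicability rules; confirm with legal counsel."""
    found = set()
    if "EU" in system.jurisdictions:
        found.add("EU AI Act")
        if system.processes_personal_data:
            found.add("GDPR")
    if "US-CA" in system.jurisdictions and system.processes_personal_data:
        found.add("CCPA")
    if any(j == "US" or j.startswith("US-") for j in system.jurisdictions):
        found.add("NIST AI RMF (voluntary)")
    if "SG" in system.jurisdictions:
        found.add("Singapore Model AI Governance Framework (voluntary)")
    return found


inventory = [
    AISystem("resume-screener", "Rank job applicants", {"EU", "US-CA"}, True, ["ats_exports"]),
    AISystem("ticket-router", "Route support tickets", {"SG"}, False),
]
for system in inventory:
    print(system.name, sorted(applicable_frameworks(system)))
```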


Step 2: Assess gaps against requirements

Take the systems you’ve cataloged and assess whether you can actually meet the compliance obligations you identified for each one.

For organizations managing cross-framework complexity, tools like Hyperproof help visualize where controls satisfy multiple requirements, preventing duplicate compliance work across frameworks like NIST AI RMF, ISO 27001, the EU AI Act, and ISO 42001.
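At its core, a gap assessment is a many-to-many mapping between the controls you have and the requirements you must meet. In this sketch, the control IDs and requirement labels are illustrative placeholders, not clause citations from any framework. Any requirement not covered by an implemented control is a gap, and a control that maps to several frameworks is one you only have to build once.

```python
# Illustrative controls: (implemented?, requirements it satisfies).
# Requirement labels are placeholders, not exact clause names.
CONTROLS = {
    "CTRL-01 bias testing": (True, {"EU AI Act: data governance", "NIST AI RMF: Measure",
                                    "ISO 42001: impact assessment"}),
    "CTRL-02 decision logging": (False, {"EU AI Act: record-keeping", "ISO 42001: event logging"}),
    "CTRL-03 human review of denials": (True, {"EU AI Act: human oversight", "NIST AI RMF: Manage"}),
}

REQUIRED = {
    "EU AI Act: data governance", "EU AI Act: record-keeping", "EU AI Act: human oversight",
    "NIST AI RMF: Measure", "NIST AI RMF: Manage",
    "ISO 42001: impact assessment", "ISO 42001: event logging",
}


def gaps(controls, required):
    """Requirements that no implemented control covers."""
    covered = set()
    for implemented, requirements in controls.values():
        if implemented:
            covered |= requirements
    return sorted(required - covered)


def frameworks_per_control(controls):
    """How many distinct frameworks each control helps satisfy."""
    return {cid: len({req.split(":")[0] for req in reqs}) for cid, (_, reqs) in controls.items()}


print(gaps(CONTROLS, REQUIRED))  # ['EU AI Act: record-keeping', 'ISO 42001: event logging']
print(frameworks_per_control(CONTROLS))
```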

Step 3: Establish governance and workflows

The gaps you identified reveal where decision-making authority and processes don’t exist. Start by assigning executive ownership with real authority to allocate compliance resources and approve or block AI system deployments. Build cross-functional teams that bring legal, technical, risk, and product perspectives into AI decisions before systems deploy.
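Approval rules are easier to enforce when they’re written down as data. The sketch below assumes hypothetical role names and a required-approver set per EU AI Act risk tier. Prohibited systems can never be approved, and a block from any reviewer stops the deployment.

```python
# Hypothetical approval matrix: which roles must sign off at each risk tier.
REQUIRED_APPROVERS = {
    "high": {"executive_owner", "legal", "risk", "engineering"},
    "limited": {"legal", "product"},
    "minimal": {"product"},
}


def deployment_decision(risk_tier, approvals, blocked_by=frozenset()):
    """Return (status, missing_roles) for a proposed deployment."""
    if risk_tier == "prohibited" or blocked_by:
        return ("blocked", [])
    missing = sorted(REQUIRED_APPROVERS[risk_tier] - set(approvals))
    return ("pending", missing) if missing else ("approved", [])


print(deployment_decision("high", {"legal", "risk"}))  # ('pending', ['engineering', 'executive_owner'])
print(deployment_decision("minimal", {"product"}))     # ('approved', [])
```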

Step 4: Build technical capabilities

With governance in place to make decisions, now build the technical controls those decisions require.

Use the gap analysis from step 2 as your implementation checklist, ensuring you’re building what regulations actually require rather than what seems compliance-adjacent.

Step 5: Deploy monitoring and response

Set up continuous monitoring for production systems, tracking accuracy, drift, and fairness with alert thresholds. Build incident response procedures covering detection through resolution, including when to notify regulators. Test these procedures before you need them.
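As a rough illustration of alert thresholds, the sketch below computes the Population Stability Index (PSI), one common drift metric, between a baseline and a current score distribution, then checks it alongside accuracy and a fairness ratio. PSI is our choice of drift metric here, and the threshold values (0.2 for PSI, 0.90 for accuracy, 0.8 for the adverse impact ratio) are assumptions you would tune per system.

```python
import math


def psi(expected, actual, eps=1e-6):
    """Population Stability Index between two binned distributions given as proportions."""
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) for empty bins
        total += (a - e) * math.log(a / e)
    return total


# Assumed thresholds: ("min", x) alerts below x, ("max", x) alerts above x.
THRESHOLDS = {
    "accuracy": ("min", 0.90),
    "psi": ("max", 0.2),
    "adverse_impact_ratio": ("min", 0.8),
}


def alerts(metrics, thresholds=THRESHOLDS):
    """Names of metrics that breached their threshold."""
    breached = []
    for name, (kind, limit) in thresholds.items():
        value = metrics[name]
        if (kind == "min" and value < limit) or (kind == "max" and value > limit):
            breached.append(name)
    return sorted(breached)


baseline = [0.25, 0.25, 0.25, 0.25]  # score distribution at validation time (4 bins)
current = [0.10, 0.20, 0.30, 0.40]   # this week's production scores, same bins
metrics_this_week = {"accuracy": 0.93, "psi": psi(baseline, current), "adverse_impact_ratio": 0.78}
print(round(metrics_this_week["psi"], 3))  # 0.228
print(alerts(metrics_this_week))           # ['adverse_impact_ratio', 'psi']
```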

Step 6: Maintain ongoing compliance

Schedule regular reviews that reassess systems against evolving requirements: quarterly for high-risk systems and annually for lower-risk ones. Assign responsibility for tracking regulatory changes, and build change management that loops back to step 2 whenever systems are modified. Platforms like Hyperproof automate evidence collection, track testing schedules, and maintain audit trails demonstrating continuous compliance over time.
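The review cadence itself is simple date arithmetic. This sketch assumes three-month intervals for high-risk systems and twelve for everything else, and it treats any modification since the last review as making a system due immediately, mirroring the loop back to step 2. The function names and inventory format are hypothetical.

```python
import calendar
from datetime import date

# Assumed intervals in months: quarterly for high risk, annually otherwise.
REVIEW_INTERVAL_MONTHS = {"high": 3, "limited": 12, "minimal": 12}


def add_months(d: date, months: int) -> date:
    """Same day N months later, clamped to the last day of shorter months."""
    index = d.month - 1 + months
    year, month = d.year + index // 12, index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def due_for_review(systems, today):
    """Systems whose scheduled review has arrived, or that changed since their last review."""
    due = []
    for name, (tier, last_review, modified_since) in systems.items():
        if modified_since or add_months(last_review, REVIEW_INTERVAL_MONTHS[tier]) <= today:
            due.append(name)
    return sorted(due)


# Hypothetical inventory: name -> (risk tier, last review date, modified since review?)
systems = {
    "resume-screener": ("high", date(2025, 1, 15), False),
    "spam-filter": ("minimal", date(2025, 1, 15), False),
    "support-chatbot": ("limited", date(2025, 3, 1), True),
}
print(due_for_review(systems, date(2025, 5, 1)))  # ['resume-screener', 'support-chatbot']
```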

What separates good AI compliance from great AI compliance?

The short answer? Good compliance checks the boxes. Great AI compliance embeds new checks and balances continuously throughout the GRC journey:

Protips for AI compliance

Embed AI compliance into development pipelines so non-compliant systems can’t deploy, rather than treating it as a pre-launch gate when fixes become expensive.

Generate documentation during development as an engineering byproduct, capturing decisions when made rather than reconstructing them months later for audits.

Map controls across frameworks to satisfy multiple requirements with single implementations, avoiding duplicate work across NIST AI RMF, EU AI Act, and ISO 42001.

Monitor emerging regulations and take part in drafting codes of practice to help shape requirements before they’re finalized, gaining adaptation time instead of scrambling after deadlines.

Distribute compliance accountability to teams building systems, making data scientists own fairness and engineers own explainability rather than centralizing in legal.

Use mandated bias testing to identify performance gaps and underserved segments, converting compliance work into product improvement rather than overhead.

Centralize operations in GRC platforms like Hyperproof that automate evidence collection and maintain cross-framework mappings instead of managing compliance through spreadsheets.
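To illustrate the first tip, here’s a sketch of a pipeline gate: a script your CI system runs before deployment that fails the build when required compliance artifacts are missing or a bias metric falls below threshold. The manifest file, its keys, and the 0.8 threshold are all hypothetical. The only convention it relies on is that CI systems generally treat a non-zero exit code as a failed step.

```python
import json
import sys

# Hypothetical manifest keys produced earlier in the pipeline.
REQUIRED_ARTIFACTS = ("model_card", "bias_report", "approval_record")


def gate(manifest: dict, min_impact_ratio: float = 0.8) -> list[str]:
    """Return a list of failures; an empty list means the release may proceed."""
    failures = [f"missing {key}" for key in REQUIRED_ARTIFACTS if not manifest.get(key)]
    ratio = manifest.get("min_adverse_impact_ratio")
    if ratio is None:
        failures.append("missing min_adverse_impact_ratio")
    elif ratio < min_impact_ratio:
        failures.append(f"adverse impact ratio {ratio} is below {min_impact_ratio}")
    return failures


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage (hypothetical file names): python compliance_gate.py compliance_manifest.json
    with open(sys.argv[1]) as f:
        problems = gate(json.load(f))
    for problem in problems:
        print("compliance gate:", problem, file=sys.stderr)
    sys.exit(1 if problems else 0)
```

Because the manifest is written as the model is built, this also nudges teams toward the second tip: documentation produced during development rather than reconstructed for an audit.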

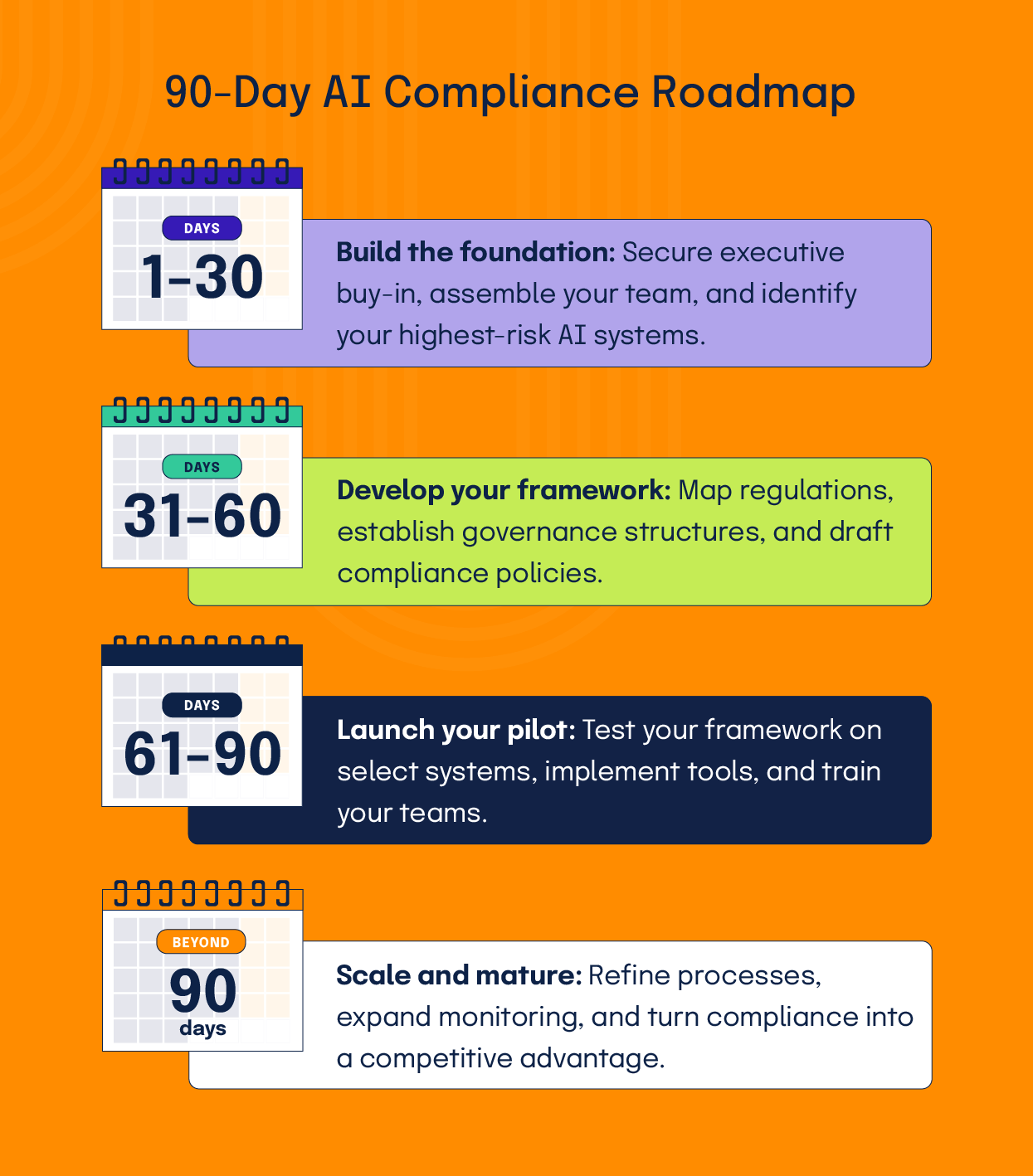

What actions can teams take to get started with AI compliance?

First 30 days: Build the foundation

The initial month focuses on securing organizational commitment and understanding what AI exists across your enterprise.

Action items for the first 30 days are as follows:

Deliverable at day 30: Executive briefing containing your AI inventory, initial risk assessment of high-priority systems, proposed governance structure, and resource requirements for the next phases.

Days 31-60: Develop your framework

Month two shifts to assessment and design, establishing the structures and policies your program will operate under.

Action items for days 31 to 60 are as follows:

Deliverable at day 60: Compliance roadmap with regulatory requirements mapped, gap analysis with prioritized actions, governance structure with defined roles, and draft policies ready for review.

Days 61-90: Launch your pilot

The third month moves from planning to execution through focused pilot implementations.

Action items for days 61 to 90 are as follows:

Deliverable at day 90: Pilot review results with lessons learned, refined policies based on experience, approved tooling plan, completed initial training, and enterprise communication materials.

Beyond 90 days: Scale and mature

Once foundational capabilities are in place, shift from implementation to improvement. Use what you learned during initial compliance work to refine approaches. Systems with strong outcomes become templates, and friction-causing processes get redesigned.

Maturity means treating compliance as a capability that improves over time. In practice, this means expanding monitoring beyond confirming that controls exist to actually preventing problems, and building feedback loops where compliance findings drive system improvements.

Organizations reaching this maturity find costs decrease while capabilities increase, turning regulatory burden into a competitive advantage.

Key takeaways

Hyperproof consolidates AI governance work into your existing GRC platform, so compliance teams can manage policies, assessments, and audit trails without adding separate tools. Get a demo to see how it fits your workflow.
