Managing AI Security Risks: Best Practices and Guidelines

AI has become a practical work tool across most business functions. From drafting content to automating repetitive tasks, the productivity case is straightforward, and adoption has followed quickly. Hyperproof’s 2026 IT Risk and Compliance Benchmark Report surveyed 1,002 GRC and cybersecurity professionals and found that 97% are already using AI in some form across their day-to-day workflows. That kind of adoption at scale brings a governance gap with it, and AI security risks are emerging from exactly that space.

AI tools are being used across teams and functions before security programs have had the chance to assess them, document them, or put appropriate controls in place. The data exposure and compliance implications that come with AI do not wait for governance to catch up.

Before getting into specific risks and what to do about them, it helps to first understand why AI creates a different kind of security challenge than what most programs were built to handle.

Why does AI demand a fresh look at security?

AI demands a fresh look at security because it breaks the foundational assumption most security programs were built on.

Traditional software behaves predictably. Given the same input, you get the same output. AI does not work that way. Generative AI and machine learning models produce outputs that are non-deterministic and context-dependent. In simple words, the same prompt (input) can yield different results depending on factors that are not always visible or controllable.

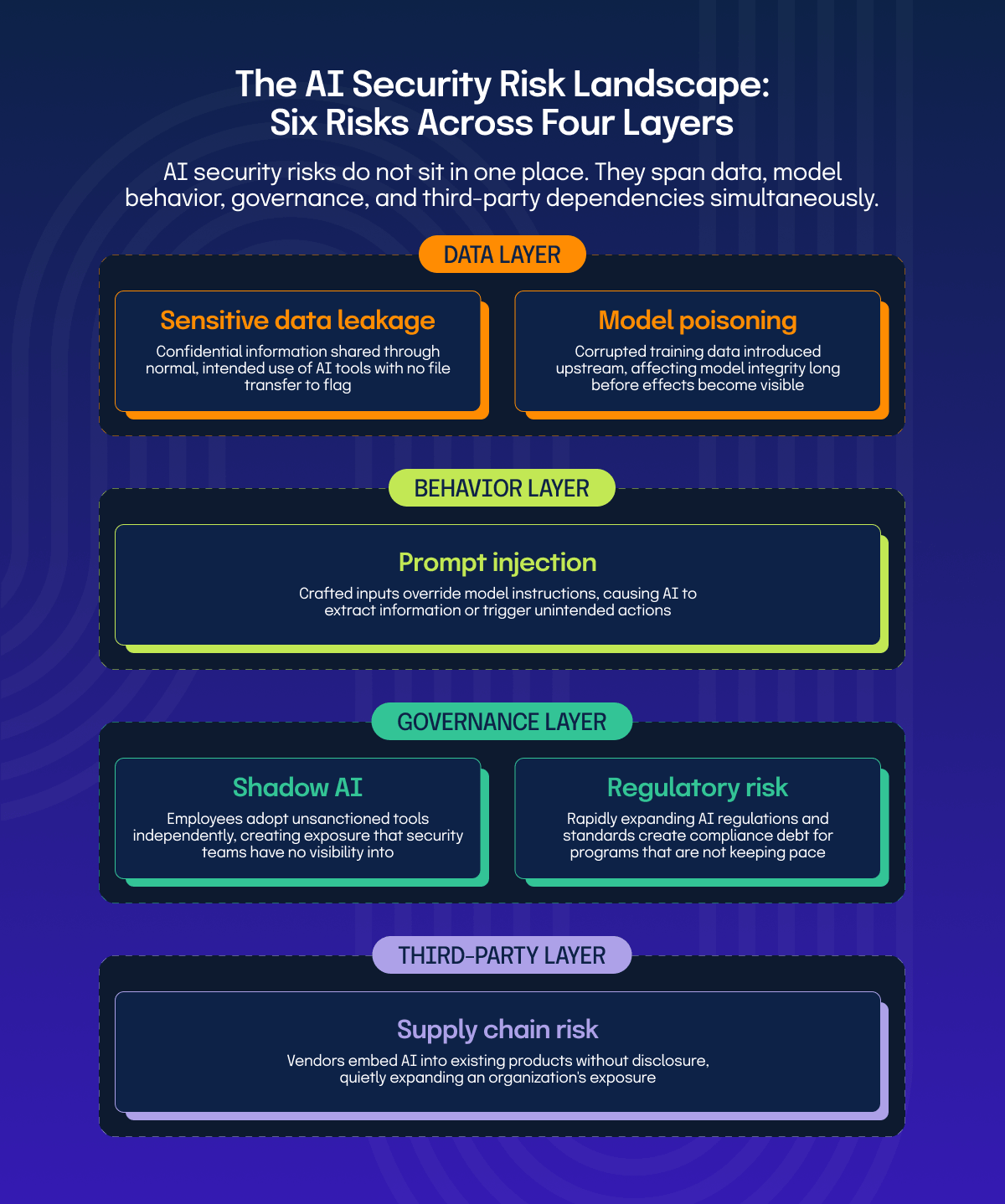

The attack surface itself looks different with AI in the picture. Where traditional software risk lives in code, configurations, and access points, AI introduces risk across training data, user inputs, model outputs, and the third-party APIs and business systems that those models connect to. Each of those layers carries its own exposure, and conventional controls were not built with any of them in mind.

Understanding where those risks actually show up is the starting point for managing them.

Common AI security risks organizations face today

Sensitive data leakage

When employees use AI tools to draft documents, summarize reports, or answer complex questions, they often include real business context in their prompts. That context frequently contains sensitive information: customer data, financial details, internal strategies, or personally identifiable information. Many AI tools, particularly consumer-grade ones, process and may retain that data outside the organization’s control.

Unlike traditional data loss scenarios, there is no file transfer to flag and no outbound email to intercept. The data leaves through the normal, intended use of the tool.

Shadow AI

When employees adopt AI tools independently without any formal evaluation, approval, or awareness from IT or security teams, it is commonly referred to as “Shadow AI.” This mirrors the shadow IT challenge organizations dealt with in the early 2010s, but the stakes are considerably higher. AI tools interact with data in ways that are far less transparent than a typical SaaS application.

Without an accurate picture of what AI tools are in use and how they are being used, exposure is harder to assess, and incidents are harder to contain.

Third-party and supply chain AI risk

AI risk does not stop at the tools an organization knowingly adopts. Vendors are increasingly embedding AI capabilities into existing products, sometimes without explicit disclosure or prominent documentation.

An organization’s risk exposure can quietly expand as the software it already relies on becomes AI-enabled. Most existing due diligence processes were not written with this in mind. The gaps show up in several places:

This makes third-party AI risk one of the more underassessed areas in any enterprise security program.

Prompt injection attacks

Prompt injection occurs when an attacker crafts inputs designed to override or manipulate an AI system’s instructions, causing it to behave in unintended ways. This can mean extracting information that the model was not supposed to share or triggering actions in systems the AI is connected to.

What makes this particularly difficult is that the attack surface is the natural language input itself, which is hard to validate or sanitize in the way traditional code inputs can be.

Model poisoning

Model poisoning happens when an attacker introduces corrupted or manipulated data into the training pipeline of an AI model. The goal is to influence how the model behaves, either broadly or in specific scenarios. For organizations building proprietary models or fine-tuning existing ones on internal data, this is a real integrity concern.

A poisoned model may produce subtly incorrect outputs, make biased decisions, or behave in ways that are difficult to trace back to the root cause. The challenge is that the compromise happens upstream, often long before the effects become visible.

Regulatory and compliance risk

The regulations around AI are moving faster than most compliance programs anticipated. Between 2024 and 2025 alone, 1,208 AI-related bills were introduced across US states, with 145 of them passing into law. And that is just one country. For teams that have not started mapping their AI practices to emerging requirements, the gap between the current state and what regulators will expect is quietly widening.

For security and compliance teams trying to build structure around AI governance, a few key regulations and standards are already shaping how that work gets done:

Getting ahead of these requirements is considerably less disruptive than addressing them under pressure.

Why are technical controls alone not enough?

Technical controls alone are not enough to manage AI security risks because most AI-related exposure does not come from unauthorized access. It comes from normal, intended use. An employee sharing sensitive data with an AI tool is not bypassing any control. A vendor quietly updating their product with an AI component is not triggering any alert. These are governance and visibility problems, and no security tool can solve them on its own.

Organizations that rely solely on technical controls will find themselves with significant blind spots. Knowing what AI tools are in use, who is using them, and whether that use aligns with policy requires processes and oversight mechanisms that no security tool can provide on its own.

That is where governance, risk, and compliance practices become not just useful but necessary.

Best practices for managing AI security risks

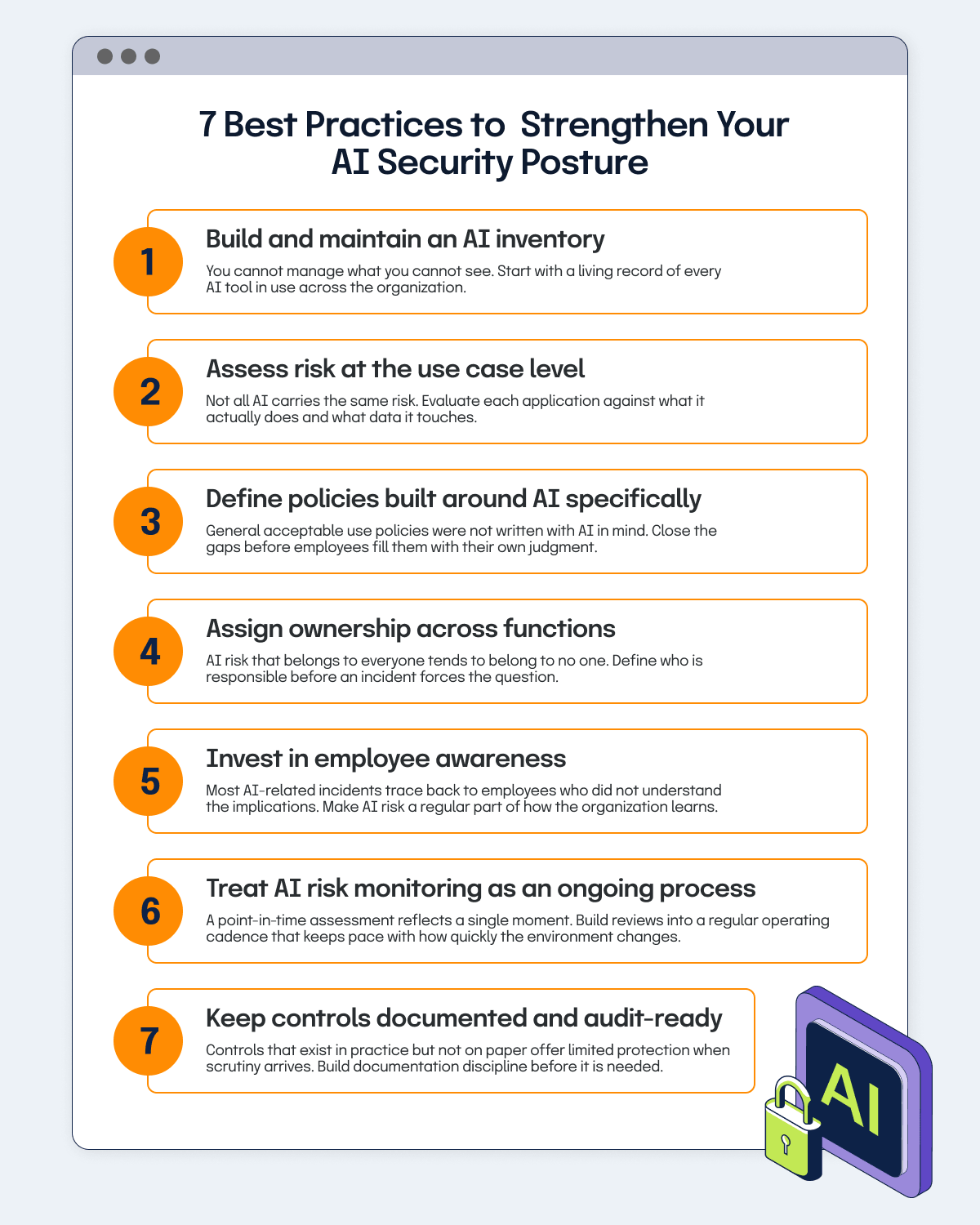

Addressing AI security risks effectively comes down to a few deliberate, repeatable practices that build visibility and control over time.

Build and maintain an AI inventory

Without a reliable inventory, every other risk management effort rests on incomplete information. Employees adopt tools independently, vendors embed AI into existing products, and the actual AI footprint across a business can grow faster than anyone is actively tracking. If you cannot see what is in use, you cannot assess it, govern it, or respond when something goes wrong.

Start by cataloging every AI tool in use, who is using it, what data it touches, and what purpose it serves. Treat this as a living record rather than a one-time exercise. It needs to stay current as tools change, new ones get introduced, and vendors quietly update products already in use across the business.

Assess risk at the use case level

Not all AI carries the same risk, and treating it uniformly leads to misallocated effort. Applying the same level of scrutiny to a meeting summary tool as to a model processing customer financial data creates unnecessary friction in low-risk areas while leaving high-risk ones under-examined. That misalignment is where exposure tends to build up unnoticed.

Once there is visibility into what is in use, evaluate each AI application against the risk it actually carries. Risk-rating at the use case level enables proportionate controls to be applied, review cycles to be prioritized, and defensible decisions to be made about what gets approved, what gets restricted, and what requires deeper assessment before deployment.

Define policies built around AI specifically

General acceptable use policies were not written with AI in mind, and the gaps show up quickly in practice. Without AI-specific guidance, employees fill the space with their own judgment about what is acceptable. That inconsistency creates exposure that is difficult to detect and harder to address after the fact.

Building an effective AI policy requires going beyond what existing guidelines cover. Here is where organizations can start:

Policy does not prevent every incident, but it draws a clear line and gives security teams something enforceable to work with when that line is crossed.

Assign ownership across functions

AI risk that belongs to everyone in theory tends to belong to no one in practice. Without explicit ownership, risk assessments happen inconsistently, policy enforcement falls through the cracks, and accountability gaps accumulate quietly across teams. Unaddressed ownership gaps are one of the more common reasons AI security risks go unmanaged for longer than they should.

Define who is responsible for AI risk before an incident forces the question. Two gaps that consistently create problems are:

Closing these gaps requires deliberate cross-functional alignment between security, legal, compliance, and business units, with documented responsibilities that do not shift every time there is a reorg.

Invest in employee awareness

Most AI-related security incidents do not trace back to malicious intent. They trace back to employees who did not understand the implications of how they were using a tool. AI tools are designed to be easy to use, and that ease can obscure the data exposure that comes with them.

Make AI risk a regular part of security awareness training rather than a one-off communication. To make it stick, training needs to go beyond checkbox compliance:

Without that consistency, policy awareness tends to fade quickly, and gaps reopen faster than they were closed.

Treat AI risk monitoring as an ongoing process

A point-in-time assessment reflects the state of an AI environment at a single moment. But that environment does not stay still. New tools get adopted, vendors update their products with new AI capabilities, regulations shift, and how employees use AI changes over time. The longer the gap between reviews, the wider the distance between what the risk picture shows and what is actually happening across the business.

Building AI risk reviews into a regular operating cadence closes that gap. In practice, that means:

Keep controls documented and audit-ready

As AI-specific regulatory requirements develop and auditors begin asking pointed questions about AI governance, controls that exist in practice but not on paper offer limited protection when scrutiny arrives. The time to build documentation discipline is before it is needed, not during an audit.

Document AI-related controls and maintain evidence of their operation as a matter of routine rather than audit preparation. In practice, this means:

As AI security risks become a formal part of regulatory and audit scope, that documentation will be the difference between an audit that goes smoothly and one that exposes gaps nobody had time to close

Taking a structured GRC approach to AI risk management with Hyperproof

The best practices covered above are only as effective as the system behind them. Without a structured way to track, manage, and report on AI-related risks and controls, even well-intentioned programs tend to fragment over time.

A structured GRC approach brings AI risk management out of isolated workflows and into a centralized, repeatable process that scales as the AI footprint grows. In practical terms, this means:

Operationalizing this kind of structure requires more than process design. It requires a platform that can hold it together consistently across teams, frameworks, and a regulatory landscape that is still taking shape. That is where Hyperproof comes in.

Centralizing risk visibility and control management

Hyperproof’s Risk Management software gives teams a structured way to identify, score, and track risks in a centralized risk register. Teams can define risk tolerance levels, assess inherent and residual risk for individual AI use cases, and link mitigating controls directly to those risks.

Because control health data integrates with the risk register in real time, security and compliance leaders always have a current view of where gaps remain and what is being done about them.

Staying aligned with AI governance frameworks and audit requirements

With support for over 140 compliance frameworks, including NIST AI RMF and ISO 42001, Hyperproof enables controls to be mapped across multiple frameworks simultaneously using a common controls approach.

Teams managing AI security risks across several regulatory requirements do not need to duplicate work for each one. Evidence collection is built into the workflow, and audit readiness is maintained on an ongoing basis rather than assembled under pressure.

Managing third-party and supply chain AI risk

Hyperproof’s Third-Party Risk Management software provides a centralized place to conduct vendor due diligence, manage assessments, and track vendor risk profiles over time. Key documents, renewal dates, and risk profiles are maintained in one place, making it easier to keep third-party AI oversight current rather than relying on annual questionnaire cycles that quickly become outdated.

For teams ready to move beyond spreadsheets and disconnected processes, book a demo to see how Hyperproof operationalizes AI risk management end to end.

See Hyperproof in Action

Related Resources

Ready to see

Hyperproof in action?