AI Risk Management Lawsuits: What They’ve Taught Us

Corporate investment in AI reached $252 billion in 2024, with private investment increasing 44% year-over-year as organizations embed intelligent systems deeper into core operations. That acceleration has been followed by a growing number of AI risk management lawsuits, signaling that legal exposure is emerging faster than most organizations can govern it.

While executive teams increasingly acknowledge the risk, cybersecurity and compliance functions remain unevenly prepared to manage it at scale. Board-level oversight of AI risk nearly tripled among Fortune 100 companies between 2024 and 2025, yet only 12% of organizations report feeling prepared to manage AI governance risks, and three out of four still lack a dedicated plan for generative AI.

As companies race to close this gap, courts are already answering foundational questions about how liability applies to AI systems in practice. Recent AI risk management lawsuits offer an early view into how judges assess responsibility when automated systems influence real-world outcomes.

This article examines seven landmark cases that show where legal exposure actually concentrates. Risk teams can use these signals to identify similar exposures in their own environments and address them before they become litigation problems.

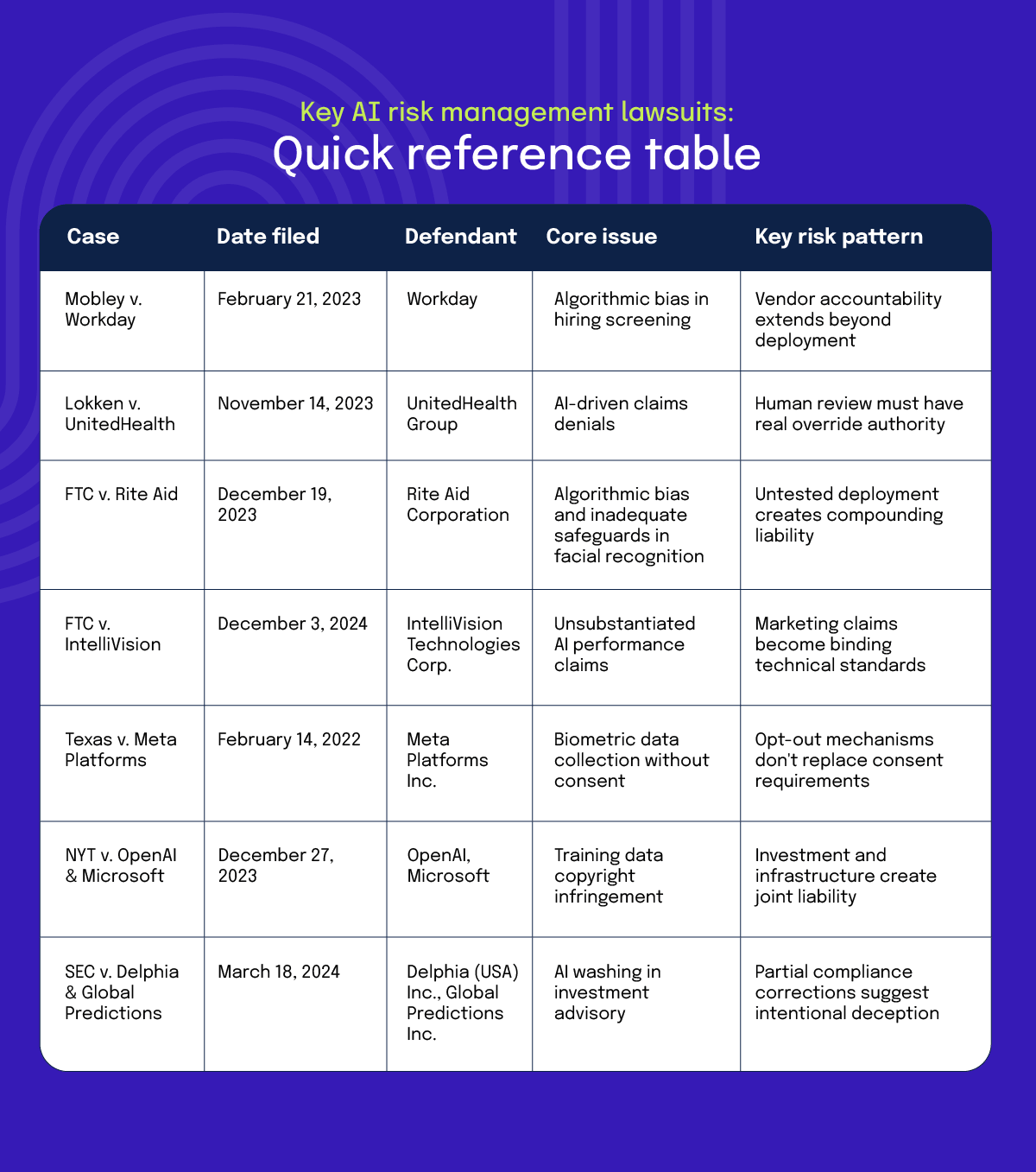

Key AI risk management lawsuits: Quick reference table

Lawsuit #1 Mobley v. Workday: Vendor AI bias liability

The case was filed in February 2023 by Derek Mobley, a job applicant, against Workday in a U.S. federal court in California. Mobley alleged that automated applicant screening systems used through Workday’s platform repeatedly rejected him from numerous positions, raising claims of discrimination based on race, age, and disability.

According to the complaint, Workday’s AI-powered tools use machine learning, personality assessments, and pymetrics to score, sort, rank, and screen applicants, with the algorithm participating meaningfully in hiring decisions rather than simply implementing employer criteria mechanically.

Accountability no longer stops at the point of deployment

In Mobley, the court did not limit its analysis to employers who used the hiring tools. It examined whether the system itself played a meaningful role in shaping outcomes. That framing breaks the assumption that accountability naturally ends with the organization deploying the technology.

When a system influences results in a material way, responsibility can extend to whoever designs, builds, or controls how it operates. This holds whether the technology is supplied by a vendor or developed internally.

The practical boundary is influence, not ownership. If an AI system recommends which candidates advance, determines eligibility thresholds, or filters applications before human review, it crosses from neutral tool to active participant. Vendors cannot rely on contractual distance from hiring decisions if their systems materially shape who gets considered.

AI is now treated like any other high-impact operational control

This case signals that internal audits, bias testing, and impact assessments are no longer optional risk mitigation strategies. They produce the evidence that can determine whether an organization is able to demonstrate business necessity if challenged.

The disparate impact framework allows plaintiffs to challenge facially neutral practices that disproportionately harm protected groups. The claims survived because the complaint plausibly alleged a unified discriminatory policy, supported by academic literature on algorithmic bias and examples like Amazon’s abandoned hiring algorithm that disadvantaged female candidates.
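One way to make bias testing concrete is the four-fifths rule of thumb from the EEOC's Uniform Guidelines, which compares each group's selection rate to the rate of the most-selected group. The sketch below is illustrative only: the group labels, sample counts, and function names are hypothetical, and a ratio below 0.8 is a screening signal that warrants investigation, not a legal finding.

```python
from collections import Counter


def selection_rates(outcomes):
    """Return the share of applicants advanced per group.

    `outcomes` is an iterable of (group, advanced) pairs, where `advanced`
    is True if the screening tool passed the applicant to the next stage.
    """
    totals, advanced = Counter(), Counter()
    for group, was_advanced in outcomes:
        totals[group] += 1
        if was_advanced:
            advanced[group] += 1
    return {group: advanced[group] / totals[group] for group in totals}


def adverse_impact_ratios(rates):
    """Divide each group's selection rate by the highest group's rate."""
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no applicants were advanced in any group")
    return {group: rate / highest for group, rate in rates.items()}


def groups_below_threshold(ratios, threshold=0.8):
    """List groups whose ratio falls below the four-fifths threshold."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Hypothetical screening results: 25 of 50 advanced vs. 12 of 40 advanced.
sample = (
    [("group_a", True)] * 25 + [("group_a", False)] * 25
    + [("group_b", True)] * 12 + [("group_b", False)] * 28
)
rates = selection_rates(sample)
ratios = adverse_impact_ratios(rates)
flagged = groups_below_threshold(ratios)
```

Running a check like this on a schedule, and keeping the dated results, is what turns bias testing into evidence an organization can produce later.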

The next case involves automated decision-making in a regulated industry. Healthcare claims processing provides a clear example.

Lawsuit #2 Estate of Gene B. Lokken v. UnitedHealth Group: Wrongful AI claims denials

The lawsuit was brought by the estate of Gene B. Lokken against UnitedHealth Group, following the denial of extended post-acute care coverage. The claims focused on the use of an automated system to assess medical necessity for continued treatment.

According to the complaint, coverage determinations relied heavily on algorithmic assessments that conflicted with treating physicians’ recommendations. The central issue was not whether automation existed, but how much weight it carried in final decisions. Clinical judgment remained part of the process, yet the system’s output effectively set the boundaries of what care would be approved.

Human involvement does not equate to human control

A central issue raised by the case was whether human review retained real authority. When automated recommendations function as defaults and human input mainly affirms system output, oversight becomes procedural rather than substantive.

This distinction matters in regulated environments like insurance, and it has real implications for security and compliance teams. The question is not whether humans are involved, but whether they can actually change outcomes once the system sets limits. Audit trails need to show not just that someone reviewed a decision, but that they had the ability to override it.
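A rough way to test whether review is substantive is to measure how often reviewers actually change the system's output and whether they are permitted to. The sketch below assumes a hypothetical `Review` record and an arbitrary 2% minimum override rate; both are placeholders for illustration, not a standard drawn from the case.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Review:
    case_id: str
    system_recommendation: str   # e.g. "deny" or "approve"
    final_decision: str          # decision recorded after human review
    reviewer_can_override: bool  # whether the reviewer's role permits changes


def override_rate(reviews):
    """Share of reviews where the final decision differs from the system's."""
    if not reviews:
        return 0.0
    changed = sum(
        1 for r in reviews if r.final_decision != r.system_recommendation
    )
    return changed / len(reviews)


def oversight_findings(reviews, min_override_rate=0.02):
    """Flag signs that human review is procedural rather than substantive."""
    findings = []
    if any(not r.reviewer_can_override for r in reviews):
        findings.append("some reviewers lack authority to override")
    if override_rate(reviews) < min_override_rate:
        findings.append("override rate below threshold")
    return findings


# Hypothetical sample: every review affirms the system's output.
sample_reviews = [
    Review("c1", "deny", "deny", True),
    Review("c2", "deny", "deny", True),
    Review("c3", "approve", "approve", True),
    Review("c4", "deny", "deny", False),
]
findings = oversight_findings(sample_reviews)
```

A low override rate is not proof of a problem on its own, since the system may simply be accurate, but it is a prompt to sample cases and confirm that reviewers exercise independent judgment.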

Risk emerges when systems set enforceable limits

Risk emerges when systems set enforceable limits rather than provide recommendations. In that configuration, risk arises through:

When technology constrains outcomes rather than informing human judgment, oversight expectations rise accordingly. The distinction matters: recommendation systems supplement decision-making, while enforcement systems replace it.

Lawsuit #3 FTC v. Rite Aid: Algorithmic bias and inadequate safeguards

Rite Aid deployed facial recognition in hundreds of stores from 2012 to 2020 to identify potential shoplifters, comparing live camera feeds against a database of security footage and employee phone photos. The FTC’s complaint alleged that the system generated thousands of false matches and performed worse for Black, Latino, Asian, and women customers.

The FTC’s December 2023 lawsuit — its first enforcement action for allegedly biased AI use — resulted in a settlement banning Rite Aid from facial recognition for five years, requiring deletion of all biometric data and algorithms, and mandating bias monitoring for future deployments.

Untested AI systems create compounding liability

Customers falsely matched by the system were followed through stores, publicly accused of shoplifting, searched, and in some cases removed by police based on algorithmic outputs the company never verified. The failures traced back to a fundamental problem. Rite Aid had built its database from tens of thousands of low-quality images captured on security cameras and employee phones, pushed employees to enroll as many people as possible, and then deployed the matching system without any accuracy testing.

The case suggests that regulators and courts can treat an untested deployment as evidence of negligence. When organizations skip validation and monitoring before production deployment, they create documentation gaps that become critical evidence in litigation.

Vendor contracts cannot disclaim accuracy responsibilities

Rite Aid contracted with two vendors to provide facial recognition technology. Both vendor contracts explicitly disclaimed any warranty regarding the accuracy or reliability of results. Rite Aid relied on those disclaimers rather than conducting its own testing. The FTC rejected this approach entirely.

Organizations deploying vendor AI cannot hide behind contractual disclaimers when the technology harms consumers. The duty to test, monitor, and ensure reasonable accuracy remains with the deploying organization regardless of what vendor contracts say.

This doesn’t absolve vendors of their own liability — as the NYT case against Microsoft demonstrates, infrastructure providers and partners can face liability too — but it establishes that deployers cannot use vendor relationships as a shield.

Lawsuit #4 FTC v. IntelliVision Technologies: Unsubstantiated AI performance claims

IntelliVision Technologies sells facial recognition software for home security systems. The company marketed its product as having one of the highest accuracy rates available, trained on millions of faces from diverse populations, and performing with zero racial or gender bias.

When the FTC investigated these claims, it found the company lacked supporting evidence. The FTC filed charges in December 2024, and the case settled within weeks. The consent order prohibits IntelliVision from making representations about its facial recognition technology’s accuracy, bias characteristics, or effectiveness for 20 years unless the company first conducts and relies on competent and reliable testing.

Marketing claims create binding technical standards

When AI vendors make specific performance claims, those claims become testable standards that regulators will enforce. IntelliVision advertised that its software could “detect faces of all ethnicities, without racial bias” and had “zero gender or racial bias through model training with millions of faces from datasets from around the world.” The FTC required the company to prove these claims with actual testing data.

The company could not. IntelliVision trained its facial recognition software on images of approximately 100,000 unique individuals, then created synthetic variants of those images, falling far short of the “millions of faces” it claimed.

This enforcement action clarifies what due diligence can look like for AI procurement. Vendor claims about accuracy, bias, or performance should trigger requests for the underlying test data. Security teams evaluating AI vendors should treat performance claims the same way they treat security certifications: verify the testing methodology, review the actual results, and document the validation process.

“Bias-free” claims require defining what bias means

The FTC’s action against IntelliVision highlights a problem many AI vendors face: making claims about “bias” without defining the term. IntelliVision advertised zero gender or racial bias, but never specified what metrics it used to measure bias or what threshold it considered acceptable.

Commissioner Ferguson’s concurring statement emphasized that when companies use ambiguous terms like “bias-free,” they bear the burden of substantiating all reasonable interpretations consumers might apply to that claim. This means organizations cannot make vague fairness assertions without specific, measurable definitions backed by testing.
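As one possible way to give "bias" a measurable definition, a team could report the false match rate per demographic group and state the largest gap it will tolerate. The sketch below is an assumption-laden illustration: the trial format, group labels, counts, and the 0.01 tolerance are all hypothetical, and other metrics may fit a given product better.

```python
def false_match_rates(trials):
    """Compute the false match rate per group.

    `trials` is an iterable of (group, same_person, predicted_match).
    Only impostor pairs (same_person is False) count toward the rate.
    """
    impostor_pairs, false_matches = {}, {}
    for group, same_person, predicted_match in trials:
        if same_person:
            continue
        impostor_pairs[group] = impostor_pairs.get(group, 0) + 1
        if predicted_match:
            false_matches[group] = false_matches.get(group, 0) + 1
    return {g: false_matches.get(g, 0) / n for g, n in impostor_pairs.items()}


def max_disparity(rates):
    """Largest gap in false match rate between any two groups."""
    return max(rates.values()) - min(rates.values())


def claim_is_supported(rates, tolerance):
    """True only if the measured gap stays within the stated tolerance."""
    return max_disparity(rates) <= tolerance


# Hypothetical test set: 100 impostor pairs per group.
trials = (
    [("group_x", False, True)] * 1 + [("group_x", False, False)] * 99
    + [("group_y", False, True)] * 4 + [("group_y", False, False)] * 96
)
fmr = false_match_rates(trials)
supported = claim_is_supported(fmr, tolerance=0.01)
```

Publishing the metric, the test set description, and the tolerance alongside the claim gives readers one interpretation to hold the vendor to, rather than every reasonable one.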

Lawsuit #5 Texas v. Meta Platforms: Consent requirements for biometric collection

Texas Attorney General Ken Paxton sued Meta Platforms in February 2022, alleging the company violated state biometric privacy law by collecting facial geometry data from millions of Texans without consent through its Tag Suggestions feature.

Meta had rolled out the feature in 2011, automatically enabling it for all users to identify people in uploaded photos and extract facial geometry records, running the technology on virtually every face uploaded to Facebook for over a decade without informing users or obtaining required consent.

The case was settled in July 2024 for $1.4 billion, the largest privacy settlement ever obtained by a single state.

Opt-out mechanisms do not replace consent requirements

Automatically enrolling users in biometric systems and offering opt-out options does not satisfy legal consent requirements. Texas law requires businesses to inform individuals and obtain their consent before capturing biometric identifiers. The distinction between opt-in and opt-out matters because biometric data is permanent: unlike a password, it cannot be changed if compromised.

Security teams building authentication systems should implement affirmative consent flows that explain what biometric data will be collected, how it will be used, and how long it will be retained before users can proceed. Courts can treat automatic enrollment with an opt-out option as a failure to obtain consent, not as evidence of user choice.
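A minimal sketch of such a gate follows, assuming a hypothetical `ConsentRecord` stored per user. The field names are illustrative, and whether this structure satisfies any particular statute is a question for counsel. The point it shows is that the default is "no capture" until disclosures have been shown and the user has taken an explicit action.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class ConsentRecord:
    user_id: str
    purpose_shown: bool    # user saw what data is collected and why
    retention_shown: bool  # user saw how long the data is retained
    opted_in_at: Optional[datetime] = None  # set only by an explicit user action


def may_capture_biometrics(record: Optional[ConsentRecord]) -> bool:
    """Allow capture only after disclosures and an explicit opt-in.

    A missing record means no consent: the default is off, never on.
    """
    if record is None:
        return False
    return (
        record.purpose_shown
        and record.retention_shown
        and record.opted_in_at is not None
    )


# Hypothetical users: one auto-enrolled without action, one who opted in.
auto_enrolled = ConsentRecord("u1", purpose_shown=False, retention_shown=False)
opted_in = ConsentRecord(
    "u2",
    purpose_shown=True,
    retention_shown=True,
    opted_in_at=datetime(2024, 1, 15, tzinfo=timezone.utc),
)
```

Storing the opt-in timestamp also gives auditors a record of when consent was obtained relative to the first capture.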

State biometric privacy laws create significant enforcement risk

Texas passed CUBI in 2009, but the Meta settlement represents the first lawsuit brought and first settlement obtained under the statute. The $1.4 billion settlement dwarfs the $390 million that 40 states collectively obtained from Google in 2022, demonstrating that single-state enforcement can produce larger penalties than multi-state actions.

Organizations operating across states need visibility into varying biometric privacy requirements. Some states require explicit consent, others mandate data retention schedules, and several impose destruction requirements when biometric data is no longer needed.

Security teams should maintain a compliance matrix showing which state laws apply to their operations and what controls are required in each jurisdiction.
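Such a matrix can start as a simple mapping from jurisdiction to required controls. In the sketch below the jurisdiction keys and control names are placeholders, not statements of what any state's law requires; real entries should come from legal review.

```python
# Placeholder jurisdictions and control names; replace with reviewed entries.
COMPLIANCE_MATRIX = {
    "STATE_A": {"explicit_consent", "retention_schedule"},
    "STATE_B": {"explicit_consent", "destruction_on_expiry"},
    "STATE_C": {"retention_schedule"},
}


def required_controls(operating_states):
    """Union of controls required across every state of operation.

    An unknown state raises KeyError so that gaps in the matrix fail loudly.
    """
    required = set()
    for state in operating_states:
        required |= COMPLIANCE_MATRIX[state]
    return required


def control_gaps(operating_states, implemented):
    """Map each state to the required controls not yet implemented."""
    gaps = {}
    for state in operating_states:
        missing = COMPLIANCE_MATRIX[state] - set(implemented)
        if missing:
            gaps[state] = sorted(missing)
    return gaps


# Hypothetical footprint: operating in two states with one control in place.
gaps = control_gaps(["STATE_A", "STATE_B"], implemented={"explicit_consent"})
```

Keeping the matrix in a versioned file makes it easy to show when a requirement was added and when the matching control went live.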

Lawsuit #6 The New York Times v. OpenAI and Microsoft: Training Data Copyright Infringement

The New York Times sued OpenAI and Microsoft in December 2023, alleging OpenAI used millions of copyrighted Times articles without authorization to train ChatGPT, creating models that can reproduce substantial portions of Times content as a free substitute for paid subscriptions. Microsoft was named as a defendant based on its investment in OpenAI and the provision of computing infrastructure.

In April 2025, Judge Sidney Stein allowed the main copyright infringement claims to proceed to discovery. In June 2025, he upheld Magistrate Judge Ona Wang’s order requiring OpenAI to preserve all output logs, including deleted user conversations, so the parties can determine whether the models reproduce Times content.

Fair use assumptions cannot yet substitute for copyright clearance

OpenAI argues training is transformative because models learn patterns rather than storing originals. The Times argues this directly competes with its business by letting users access content without subscriptions.

If the fair use defense fails, AI companies will need permission or licenses to train on copyrighted works. If it prevails, current industry practice is validated, but questions remain about commercial use boundaries. Until the court decides, treating fair use as settled is a bet rather than a control.

Investment and infrastructure can create joint liability exposure

Microsoft was named as a defendant despite being one step removed from development. The Times claimed Microsoft bears vicarious and contributory liability through:

The court allowed these claims to proceed, indicating that the alleged partnership structure and profit-sharing could be enough to support liability.

Lawsuit #7 SEC v. Delphia and Global Predictions: AI washing in investment advisory

The Securities and Exchange Commission filed these lawsuits in March 2024 against two investment advisers, Delphia (USA) Inc. and Global Predictions Inc., for making false and misleading statements about their use of artificial intelligence. The SEC alleged both companies violated the Investment Advisers Act of 1940, specifically Section 206(2), Section 206(4), and the Marketing Rule, by advertising AI capabilities they did not possess.

Both cases settled immediately, with Delphia paying $225,000 and Global Predictions paying $175,000. Both firms were ordered to cease violations, implement compliance policies, and substantiate future AI claims with reliable testing. These were the SEC’s first enforcement actions explicitly targeting “AI washing.”

Fixing regulatory filings doesn’t resolve misleading marketing materials

When SEC examiners questioned Delphia in July 2021, the company acknowledged it had never built the AI system it described in public statements. Delphia updated its formal regulatory disclosures in August 2021 to reflect this reality.

The company then spent the next two years making identical false claims in client communications, social media content, and even a press release. The last misleading statement appeared in August 2023.

The SEC treated this as a continuing violation, establishing that organizations cannot selectively correct some channels while leaving others unchanged. Regulatory filings, marketing materials, websites, and client communications must all be updated together. Partial corrections suggest intentional deception rather than good-faith error.

Organizations need proof in hand before publishing AI claims

The SEC enforcement actions didn’t evaluate whether the technologies Delphia and Global Predictions described would meet any technical definition of artificial intelligence. The investigation centered on a simpler question: can you prove what you claimed?

Delphia said it analyzed client data with machine learning. Global Predictions said it was the first regulated AI advisor. When asked to provide supporting evidence, neither company had any.

Organizations making public statements about AI face an evidentiary standard. Claims about what systems do, how they work, or what makes them unique require documentation created before the claim appears in public. This can include:

These materials should exist at the time claims are published, rather than being compiled retroactively in response to inquiries.
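The timing requirement can be checked mechanically. The sketch below assumes a hypothetical claims inventory in which each public claim lists its supporting evidence with creation dates; the record types, claim text, and dates are invented for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Evidence:
    description: str
    created_on: date


@dataclass(frozen=True)
class PublicClaim:
    text: str
    published_on: date
    evidence: tuple = ()


def unsubstantiated(claims):
    """Return claims with no evidence dated on or before publication."""
    return [
        claim for claim in claims
        if not any(e.created_on <= claim.published_on for e in claim.evidence)
    ]


# Hypothetical inventory: the second claim's only evidence postdates it.
claims = [
    PublicClaim(
        "Model evaluated on held-out data",
        date(2024, 3, 1),
        (Evidence("evaluation report", date(2024, 2, 10)),),
    ),
    PublicClaim(
        "Uses machine learning on client data",
        date(2024, 3, 1),
        (Evidence("design memo", date(2024, 6, 5)),),
    ),
]
needs_review = unsubstantiated(claims)
```

Running this against every channel where a claim appears, not just regulatory filings, also helps catch the partial-correction problem described above.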

How to protect your business against emerging threats from AI technologies

These AI risk management lawsuits reveal what courts examine most closely when assigning liability. Below are some strategies you can use to address the most common exposure points.

Manage AI systems like security infrastructure

Security teams already know how to classify databases, applications, and network assets by risk level. AI systems should get the same treatment. Classify them by risk tier using the same approach applied to data classification policies.
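As a rough illustration, tiering can begin with a few yes-or-no attributes per system. The attributes, tier names, and rules below are assumptions chosen to echo the cases in this article, not an established classification scheme.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AISystem:
    name: str
    affects_individuals: bool  # e.g. hiring, coverage, or access decisions
    uses_sensitive_data: bool  # e.g. biometric or health data
    human_can_override: bool   # reviewers can change the outcome


def risk_tier(system):
    """Assign a tier from simple, documented rules."""
    if system.affects_individuals and not system.human_can_override:
        return "high"
    if system.affects_individuals or system.uses_sensitive_data:
        return "medium"
    return "low"


# Hypothetical inventory entries.
inventory = [
    AISystem("resume_screener", True, False, False),
    AISystem("claims_assistant", True, True, True),
    AISystem("log_summarizer", False, False, True),
]
tiers = {system.name: risk_tier(system) for system in inventory}
```

Even a coarse scheme like this gives each system an owner-reviewable label that can sit next to existing asset classifications.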

The challenge is that AI often gets tracked separately from other operational risks, in spreadsheets or standalone documents disconnected from existing workflows. When AI risk lives in isolation, it becomes difficult to understand how new deployments affect overall security posture or ensure accountability when issues arise.

Teams using platforms like Hyperproof can document AI systems in the same risk register they use for other enterprise risks, assigning owners and linking controls in a unified view.

As new AI capabilities deploy or existing ones get adjusted, the risk register updates in real time, showing how these changes affect the organization’s risk profile. This eliminates the fragmentation that comes from managing AI separately and ensures someone actually owns the outcome when things go wrong.

Embed AI governance into existing security and GRC processes

AI risk spans multiple domains simultaneously: employment decisions, product safety, intellectual property, data privacy, and more. Organizations that create standalone AI governance programs often build systems that teams route around under pressure because they exist outside normal workflows.

The more effective approach incorporates AI-specific controls into frameworks already in use, like Service Organization Controls (SOC 2®), NIST Cybersecurity Framework (CSF), or ISO 27001. Rather than building parallel compliance structures, organizations can leverage framework mapping to show how a single AI control satisfies multiple requirements across different standards.

Hyperproof’s Jumpstart feature automates this mapping, letting teams demonstrate compliance without duplicating documentation or control testing.

For organizations implementing AI-specific frameworks, Hyperproof also supports emerging standards like NIST AI Risk Management Framework (RMF) and ISO 42001 out of the box or allows teams to create custom frameworks for internal AI governance requirements.

When AI governance operates within established processes rather than as an add-on, it survives organizational pressure and competing priorities.

Map liability exposure across your entire vendor ecosystem

As AI risk management lawsuits targeting vendors increase, organizations need visibility into their entire ecosystem. Hyperproof’s Third-Party Risk Management module centralizes vendor tracking, automatically linking vendor assessments to related controls and organizational risks.

When a cloud provider updates its AI infrastructure or a new data vendor enters the supply chain, teams can immediately see how those changes affect the overall risk without rebuilding spreadsheets or hunting through email threads for the latest contract terms.

Reduce blind reliance on automated outputs

Organizations need intervention capability with clear authority to override AI recommendations and documented evidence that human judgment occurred at important decision points.

Security teams need to put basic controls in place that match the risks these cases reveal. Access controls determine who can change AI models in production systems. Logging tracks what the AI decided, why it decided that way, and when someone overrode it. This logging should connect to the monitoring tools security teams already use. Separating training systems from production systems reduces the risk that bad data affects live decisions.
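The logging control might look like the sketch below, which emits one JSON line per decision through Python's standard `logging` module so existing log pipelines can ingest it. The field names, logger name, and sample values are hypothetical, and the "reasons" a given model can provide will vary.

```python
import json
import logging
from datetime import datetime, timezone

logger = logging.getLogger("ai_decisions")  # hypothetical logger name


def decision_log_entry(model_version, subject_id, decision, reasons,
                       overridden_by=None, override_decision=None):
    """Build a record of what the system decided, why, and any override."""
    if overridden_by is not None and override_decision is None:
        raise ValueError("an override must record the reviewer's decision")
    final = override_decision if overridden_by is not None else decision
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "subject_id": subject_id,
        "decision": decision,
        "reasons": list(reasons),
        "overridden_by": overridden_by,
        "final_decision": final,
    }


def log_decision(**fields):
    """Emit the entry as one JSON line and return it for further use."""
    entry = decision_log_entry(**fields)
    logger.info(json.dumps(entry, sort_keys=True))
    return entry


# Hypothetical example: a reviewer overrides an automated denial.
entry = log_decision(
    model_version="screening-v3",
    subject_id="case-1042",
    decision="deny",
    reasons=["score below threshold"],
    overridden_by="reviewer-17",
    override_decision="approve",
)
```

Recording the system's decision and the final decision separately keeps the override visible instead of overwriting the original output.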

Task management integrated with risk workflows helps here. Hyperproof can create approval gates for high-risk decisions and maintain audit trails showing not just that review happened but when, by whom, and with what outcome.

As AI systems scale and generate more outputs requiring oversight, these automated workflows ensure the governance structure scales alongside the technology rather than breaking under pressure.

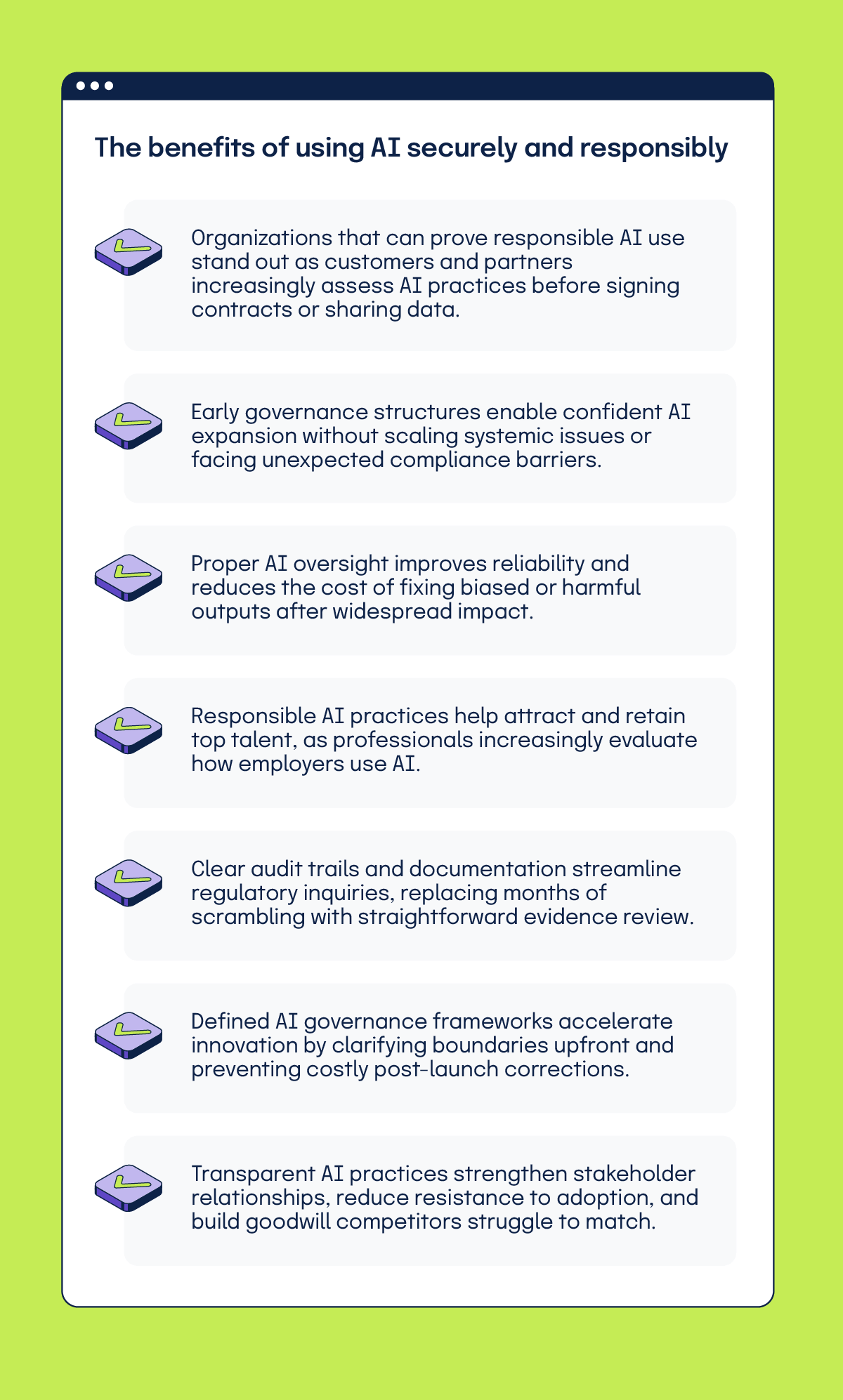

TL;DR: Protecting against AI risk management lawsuits

Hyperproof helps enterprise security and compliance teams bring AI systems into the same risk registers, compliance frameworks, and control libraries they use for everything else. This creates unified visibility across technical controls and governance requirements without duplicating effort across tools or teams.

Book a demo to see how centralized governance reduces your AI risk surface.
